How Artificial Intelligence Works (in “very, very” short)

Modern AI can feel like a magic trick. It generates stunning art, writes human-like text, writes code and devises novel strategies for complex games. But the truth is that its most powerful secret is

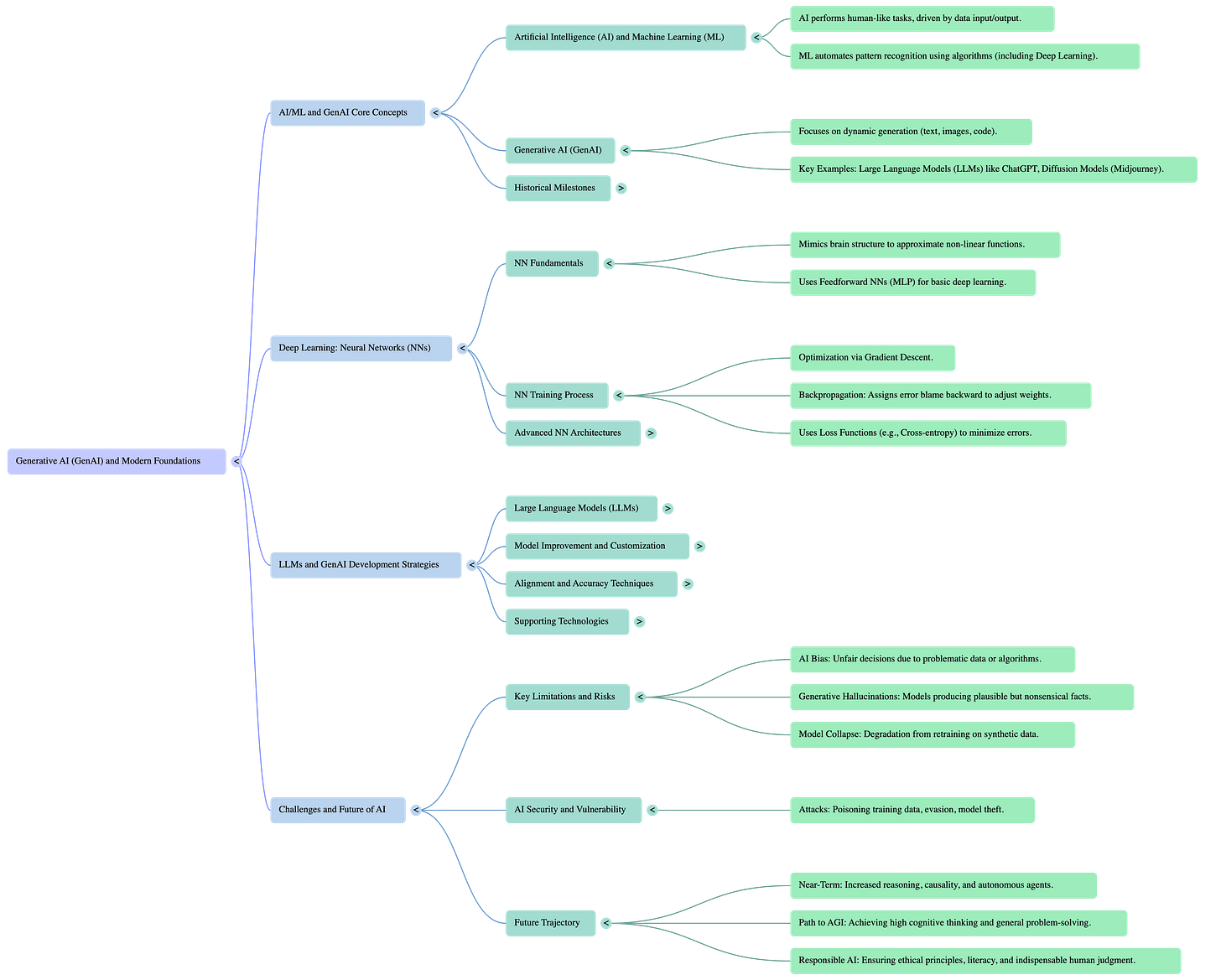

The beginning

First, modern AI is not new. It is a common misconception that the generative AI boom is the result of a brand-new invention, but the reality is more nuanced. The AI revolution is not the product of a single invention, but an assembly of ideas developed over the last decade. Today’s most advanced Large Language Models (LLMs) are built by combining and refining earlier scientific breakthroughs, including transfer learning (in 2012), self-attention (from 2015), and transformers and reinforcement learning algorithms like Proximal Policy Optimization (both from 2017). AI progress is therefore best understood as finding innovative ways to assemble cumulative scientific work. Equally, the next big thing in AI is unlikely to be an entirely new concept, but something built from the tools that we are making today.

Second, AI taught itself creativity by playing an ancient game. In March 2016, DeepMind’s AlphaGo defeated Lee Sedol, one of the world’s top players of the complex board game Go. It was then that AI showed it could do more than calculate, because winning at Go required it to invent. The match became legendary for the so-called “Move 37”, an action so unconventional that it had an estimated 1-in-10,000 likelihood of being played by a human expert. This was not just clever, but a demonstration of emergent creativity. To achieve this, AlphaGo was first trained on 160,000 games played by human experts. It then honed its abilities by playing 30 million distinct games against itself. In doing so, it moved beyond mimicking human strategy to devise its own. Move 37 remains iconic because it crystallized AI’s potential for genuine ingenuity, showing that it could discover novel solutions that lie outside the bounds of established human knowledge. I strongly recommend that anyone interested watch the full AlphaGo documentary, free on YouTube.

Third, AI art is made by destruction. The process is a paradox: for art tools like DALL-E and Midjourney to turn a simple text prompt into a photorealistic image, they first had to learn how to reverse-engineer chaos. The technology behind this is a class of algorithms called diffusion models. Their method works in two steps. First, in the Forward Process (the destruction step), a clean image is taken and random (Gaussian) noise is added to it layer by layer, until the original image is completely destroyed and only static remains. Then, in the Reverse Process (the reconstruction step), the model learns to undo this damage by predicting and removing the noise at each step. To generate a new image, the AI starts with pure noise and applies the learned denoising, gradually sculpting a novel image that matches the user’s prompt. This method was a breakthrough because it allowed diffusion models to deliver more realistic results than the generative adversarial networks (GANs) that preceded them. The creative act is fundamentally rooted in a deep understanding of destruction. By learning how to reverse chaos, the AI gains the ability to generate order and beauty from pure noise.
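To make the two steps concrete, here is a minimal sketch in plain Python. It assumes one common way of writing the noising step (a linear noise schedule, where a noisy image is a weighted mix of the clean image and Gaussian noise); the article does not commit to a specific formulation, and the function names, the 5-pixel "image" and the schedule values are all illustrative. There is no trained network here: the reverse step is handed the true noise, standing in for what a real model would have to predict.

```python
import math
import random


def make_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Fraction of the original signal left after each step.

    Assumes a linear noise schedule; the start/end values are illustrative.
    """
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def forward_noise(x0, alpha_bar, rng):
    """Destruction step: mix the clean image with Gaussian noise."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    xt = [signal * v + noise * e for v, e in zip(x0, eps)]
    return xt, eps


def estimate_clean(xt, predicted_eps, alpha_bar):
    """Reconstruction step: remove the predicted noise from the noisy image."""
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [(v - noise * e) / signal for v, e in zip(xt, predicted_eps)]


rng = random.Random(0)
alpha_bars = make_alpha_bars()
image = [0.0, 0.25, 0.5, 0.75, 1.0]  # toy 5-pixel "image" (hypothetical data)

# Halfway through the schedule the image is already mostly noise.
noisy, eps = forward_noise(image, alpha_bars[499], rng)

# A trained model would predict eps from the noisy image and the step number.
# Here we pass the true noise, so the recovery is exact up to rounding.
recovered = estimate_clean(noisy, eps, alpha_bars[499])

print("signal left at last step:", alpha_bars[-1])
print("recovered:", [round(v, 6) for v in recovered])
```

The point of the sketch is the asymmetry: adding noise needs no learning at all, while removing it requires knowing exactly which noise was added, and that prediction is the one thing the model is trained to make.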

AI “hallucinations” are a feature, not a bug

When an AI confidently states false information (a hallucination), it is tempting to call it a “bug.” In reality, it is a direct consequence of the system’s design, because an AI does not know the difference between fact and fiction. It only knows what is statistically plausible. Hallucinations occur because LLMs are autoregressive: they generate text by predicting one token after another, each conditioned on the tokens that came before (tokens are the discrete pieces, such as words or word fragments, into which text is split). Their primary goal is to predict the most plausible next token in a sequence, based on the massive dataset they were trained on. Crucially, this training objective makes no distinction between fact and fiction. The AI does not understand truth; it only understands patterns. From the AI’s perspective, there is therefore no difference between a hallucination and an accurate, factual statement. This reminds us to treat AI not as an infallible oracle but as a powerful probabilistic tool that requires human verification and judgment.
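A toy example can show why plausibility and truth come apart. The sketch below is not how an LLM is built: it is a tiny bigram model that looks only at the previous word, whereas a real LLM conditions on the whole preceding context with a neural network. The corpus, the `<end>` marker and the function names are all made up for illustration. The generation loop, however, has the same autoregressive shape: pick a plausible next token, append it, repeat.

```python
import random
from collections import Counter, defaultdict

# Hypothetical three-sentence "training set"; every sentence in it is true.
corpus = [
    "paris is the capital of france",
    "rome is the capital of italy",
    "madrid is the capital of spain",
]


def train_bigrams(sentences):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split() + ["<end>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts, prompt, rng, max_tokens=10):
    """Autoregressive loop: sample the next token given the last one."""
    tokens = prompt.split()
    for _ in range(max_tokens):
        options = counts.get(tokens[-1])
        if not options:
            break
        words = list(options)
        weights = [options[w] for w in words]
        nxt = rng.choices(words, weights=weights, k=1)[0]
        if nxt == "<end>":
            break
        tokens.append(nxt)
    return " ".join(tokens)


counts = train_bigrams(corpus)
rng = random.Random(0)
samples = [generate(counts, "paris is", rng) for _ in range(5)]
for s in samples:
    print(s)
```

After the word “of”, this model has seen “france”, “italy” and “spain” exactly once each, so it treats all three as equally good continuations of “paris is the capital of”. Nothing in the counts marks one of them as true. Larger models are vastly better at using context, but the objective is the same kind of thing: likely continuations, not verified facts.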

Conclusion

Following this arc, we get a clearer picture of AI. It is a technology whose power and peril are direct consequences of its design. Understanding how it works demystifies the hype and empowers us to use it more thoughtfully. As AI pioneer Fei-Fei Li notes, the human element is, and always has been, central to this technology: “I often tell my students not to be misled by the name ‘artificial intelligence’ because there is nothing artificial about it. AI is made by humans, intended to behave by humans, and ultimately, to impact humans’ lives and human society.” Knowing that AI is capable of brilliant creativity, but fundamentally designed to hallucinate, we must perhaps change our definition of “collaboration” with it.